Security for embedded products isn’t a feature you bolt on at the end. It’s a set of decisions baked into your architecture from day one. Most product teams know they need “security” but struggle with where to start and how much is enough. Connected devices are shipping into hostile environments, regulations are tightening, and a single vulnerability can turn a successful product into a liability.

This guide gives you a framework for thinking about embedded product security: what you’re actually protecting, where threats come from, and how to make proportional security investments.

What Are You Actually Protecting?

Most teams jump straight to “we need encryption” without first identifying what they’re defending. Before choosing mechanisms, get clear on your assets. Embedded products typically have four categories worth protecting:

Firmware and IP. Your application code, proprietary algorithms, calibration data, and configuration logic. If a competitor or counterfeiter extracts your firmware, they can clone your product without the R&D investment. In markets like medical devices or industrial controls, firmware represents years of domain-specific engineering.

Data in transit. Sensor readings, commands, credentials, and telemetry moving over a network. When an industrial controller sends a command to open a valve, or a medical device transmits patient data to the cloud, interception or manipulation of that data can cause physical harm. An attacker who can inject commands into a control system doesn’t need to be in the building.

Data at rest. Cryptographic keys, certificates, user data, and configuration stored on the device itself. A debug port left enabled or unprotected flash memory can expose every secret on the device. Once keys are extracted, they can be used to impersonate devices, decrypt communications, or forge firmware updates across your entire fleet.

Device identity. The ability to prove that a device is genuine, not cloned, spoofed, or tampered with. Cloned medical devices running unvalidated firmware have caused patient safety incidents. Spoofed industrial sensors have fed false data into control systems.

Where Threats Come From

Embedded devices don’t live in locked data centers. They’re bolted to walls in factories, installed in vehicles, or sitting on someone’s desk.

Physical access. Debug ports like JTAG and SWD, flash memory readout, bus sniffing, and side-channel attacks. Devices deployed in the field are physically accessible to anyone with a screwdriver and basic tools. An exposed JTAG header is an open invitation to dump firmware, extract keys, or flash malicious code. Even “tamper-resistant” packaging only slows a motivated attacker.

Network interfaces. Every communication channel your device uses is an attack surface: Bluetooth, Wi-Fi, cellular, Ethernet, CAN bus, Modbus, and anything else it speaks. Each protocol carries its own class of vulnerabilities. A device with a Bluetooth pairing weakness can be compromised from the parking lot. An unauthenticated, unencrypted Modbus interface on an industrial network treats every command as trusted.

Supply chain. Contract manufacturers, component suppliers, firmware distribution channels, and logistics providers all touch your product before it reaches the end user. A compromised component, tampered firmware image, or counterfeit part introduced during manufacturing can undermine every other security measure you’ve built. This threat is easy to overlook because it happens before your device is “yours.”

The threat model for a home thermostat looks nothing like the threat model for an industrial controller. Proportionality matters. Over-engineering security wastes BOM cost and development time. Under-engineering it creates liability.

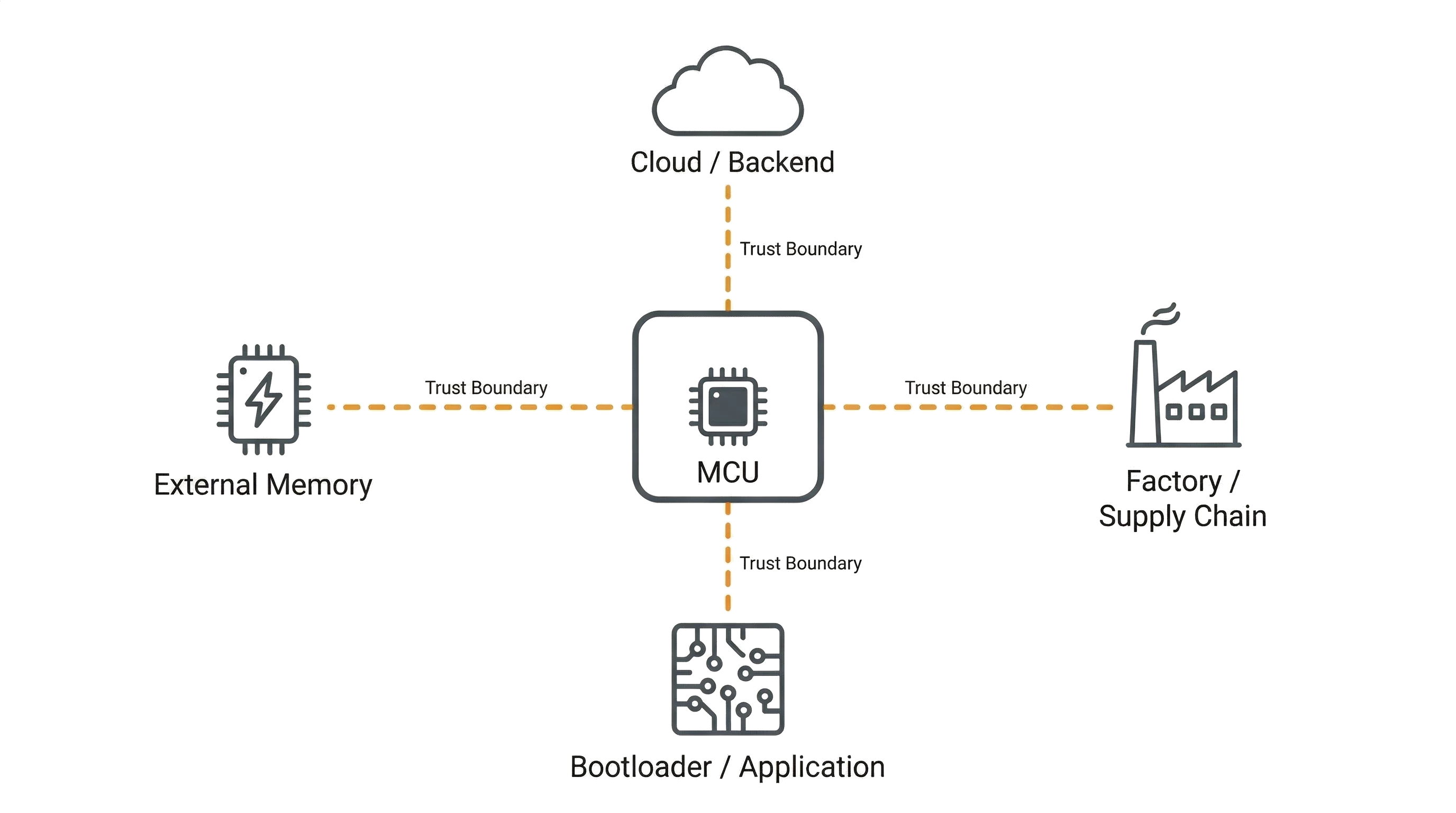

Trust Boundaries

The most useful security concept for product developers is the trust boundary. A trust boundary is where data or control crosses from a domain you trust to one you don’t.

Every trust boundary in your system needs a security mechanism: authentication, encryption, signature verification, or physical tamper detection. You don’t need to secure everything equally. Identify your boundaries, then invest proportionally.

Common trust boundaries in embedded products:

- Between your MCU and external memory. If firmware or sensitive data lives on an external flash chip, can that chip be physically swapped or read out? If yes, that’s a trust boundary that needs encryption or authentication.

- Between your device and the cloud. Can messages be spoofed, replayed, or intercepted? Mutual authentication and encrypted channels address this boundary.

- Between the bootloader and application firmware. Can the application be replaced with unsigned code? This is exactly what secure boot solves, and it’s one of the most critical boundaries in any embedded product.

- Between the factory and the field. Can devices be tampered with during manufacturing, shipping, or installation? Secure provisioning and hardware attestation protect this boundary.
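
To make the device-to-cloud boundary concrete, here is a minimal sketch of a receiver that rejects spoofed and replayed messages. It assumes a pre-shared 32-byte key and a sender-side message counter; the names `seal` and `Receiver` are hypothetical, and the sketch provides authentication and replay protection only, not confidentiality. In a real product, TLS or DTLS would normally do this work for you.

```python
import hashlib
import hmac
import struct

TAG_LEN = 32  # HMAC-SHA256 output size in bytes
HEADER_LEN = 8  # big-endian 64-bit message counter


def seal(key: bytes, counter: int, payload: bytes) -> bytes:
    """Prefix the payload with a counter and append an HMAC-SHA256 tag."""
    header = struct.pack(">Q", counter)
    tag = hmac.new(key, header + payload, hashlib.sha256).digest()
    return header + payload + tag


class Receiver:
    """Accepts a message only if its tag verifies and its counter is fresh."""

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._last_counter = -1  # highest counter accepted so far

    def open(self, message: bytes) -> bytes:
        if len(message) < HEADER_LEN + TAG_LEN:
            raise ValueError("message too short")
        body, tag = message[:-TAG_LEN], message[-TAG_LEN:]
        expected = hmac.new(self._key, body, hashlib.sha256).digest()
        # Constant-time comparison avoids leaking how many tag bytes matched.
        if not hmac.compare_digest(tag, expected):
            raise ValueError("authentication failed")
        (counter,) = struct.unpack(">Q", body[:HEADER_LEN])
        # The counter is only trusted after the tag has been verified.
        if counter <= self._last_counter:
            raise ValueError("replayed or stale message")
        self._last_counter = counter
        return body[HEADER_LEN:]
```

The order of checks matters: the counter is covered by the tag, so an attacker cannot bump it to make a captured message look fresh.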

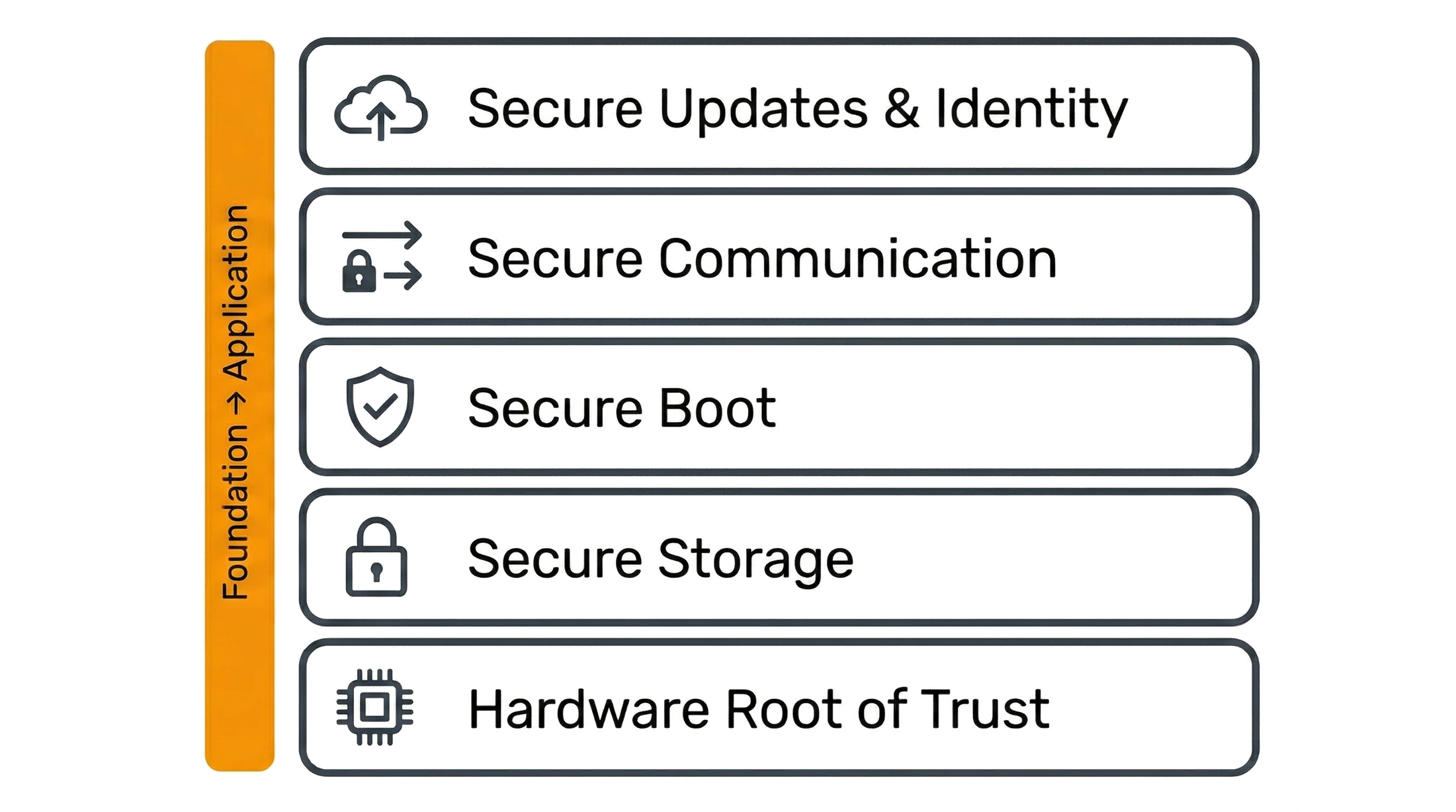

Building Your Security Architecture

Practical embedded security is built in layers, each one reinforcing the others. Start from the foundation and work up:

Hardware root of trust. Everything starts with MCU selection. Look for secure boot support, hardware key storage, and crypto acceleration. The root of trust is the one component you have to get right, because every other layer depends on it. See our guide to secure boot for a deeper look at this foundation.

Secure storage. Protecting keys, credentials, and sensitive data on-device. This means secure elements or trusted platform modules for high-value keys, encrypted flash regions for sensitive data, and anti-readout fuses to prevent debug extraction. Even with physical access to the board, extracting secrets should be impractical.

Secure communication. TLS or DTLS for network traffic, authenticated protocols for local buses, and proper certificate management. The crypto is straightforward; the key and certificate lifecycle is where teams get stuck. How do devices get their initial certificates? How are they rotated? What happens when one is compromised?
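
As a rough illustration of what the device side of a mutually authenticated TLS connection needs, here is a sketch using Python’s standard `ssl` module, as you might on an embedded Linux gateway. The function name and the three file parameters are assumptions for illustration; an MCU-class device would use its own TLS stack, but the inputs are the same: a CA to trust, a device certificate, and a private key.

```python
import ssl
from typing import Optional


def make_device_tls_context(
    ca_file: Optional[str] = None,
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a client-side TLS context for a device talking to its backend."""
    # Purpose.SERVER_AUTH means "this side is a client verifying a server":
    # certificate verification and hostname checking are enabled by default.
    # With ca_file=None the system trust store is used instead of a pinned CA.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file is not None:
        # Presenting a device certificate is what makes the handshake mutual,
        # provided the server is configured to request and verify it.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

Note what the sketch leaves open: where `cert_file` and `key_file` come from, and how they get replaced. Those are the lifecycle questions above, and on a production device the private key would ideally live in a secure element rather than in a file.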

Secure updates. Signed and encrypted over-the-air firmware updates with rollback protection. Your update mechanism is both a critical security feature and a potential attack vector. A compromised update pipeline can push malicious firmware to your entire fleet. We built exactly this kind of secure OTA system for an automotive ECU.
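
The acceptance logic for a signed update with rollback protection can be sketched as follows. The manifest layout (a 32-bit version plus a SHA-256 digest of the firmware) and all function names are hypothetical. Signature verification is passed in as a callable because a real design would use an asymmetric scheme such as ECDSA or Ed25519; the HMAC-based `hmac_verifier` here is only a stand-in to keep the sketch dependency-free. Firmware encryption is omitted.

```python
import hashlib
import hmac
import struct
from typing import Callable

MANIFEST_LEN = 4 + 32  # uint32 version + SHA-256 digest of the firmware


def make_manifest(version: int, firmware: bytes) -> bytes:
    """Build the blob that the release pipeline signs."""
    return struct.pack(">I", version) + hashlib.sha256(firmware).digest()


def accept_update(
    manifest: bytes,
    signature: bytes,
    firmware: bytes,
    min_version: int,
    verify: Callable[[bytes, bytes], bool],
) -> int:
    """Return the new version if the update is acceptable, else raise."""
    if len(manifest) != MANIFEST_LEN:
        raise ValueError("malformed manifest")
    # 1. Authenticity first: nothing in the manifest is trusted before this.
    if not verify(manifest, signature):
        raise ValueError("signature check failed")
    # 2. Rollback protection: refuse anything older than the stored minimum,
    #    which on real hardware would live in a monotonic counter or fuses.
    (version,) = struct.unpack(">I", manifest[:4])
    if version < min_version:
        raise ValueError("rollback rejected")
    # 3. Integrity: the image must match the digest that was signed.
    digest = hashlib.sha256(firmware).digest()
    if not hmac.compare_digest(digest, manifest[4:]):
        raise ValueError("firmware digest mismatch")
    return version


def hmac_verifier(key: bytes) -> Callable[[bytes, bytes], bool]:
    """Stand-in verifier for the sketch only; not a real signature scheme."""
    def verify(message: bytes, signature: bytes) -> bool:
        expected = hmac.new(key, message, hashlib.sha256).digest()
        return hmac.compare_digest(signature, expected)
    return verify
```

With a symmetric stand-in like this, every device would hold a key that can also forge updates, which is exactly why production systems sign with a private key that never leaves the release pipeline.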

Device identity and authentication. Unique per-device certificates, mutual authentication with backend services, and anti-cloning measures. Every device needs a unique identity injected during manufacturing, which means your provisioning process has to support it from day one. We’ve built identity systems like this in our biometric authentication work.
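
To show why per-device credentials matter, here is a simplified challenge-response sketch. It uses a unique symmetric key per device rather than the per-device certificates described above, purely to stay within the standard library; `Backend`, `provision`, and `device_respond` are hypothetical names. A certificate-based design has the advantage that the backend never holds device secrets.

```python
import hashlib
import hmac
import secrets
from typing import Dict


class Backend:
    """Toy registry of per-device keys created during manufacturing."""

    def __init__(self) -> None:
        self._keys: Dict[str, bytes] = {}  # serial number -> device key

    def provision(self, serial: str) -> bytes:
        """Factory step: mint a unique random key for exactly one device."""
        if serial in self._keys:
            raise ValueError("serial already provisioned")
        key = secrets.token_bytes(32)
        self._keys[serial] = key
        return key  # injected into the device's secure storage

    def new_challenge(self) -> bytes:
        # A fresh random challenge per attempt prevents replayed responses.
        # A real backend would also track issued challenges as single-use.
        return secrets.token_bytes(16)

    def verify(self, serial: str, challenge: bytes, response: bytes) -> bool:
        key = self._keys.get(serial)
        if key is None:
            return False
        expected = hmac.new(key, challenge, hashlib.sha256).digest()
        return hmac.compare_digest(response, expected)


def device_respond(device_key: bytes, challenge: bytes) -> bytes:
    """Device side: prove possession of the key without revealing it."""
    return hmac.new(device_key, challenge, hashlib.sha256).digest()
```

Because each key is unique, extracting one device’s secret lets an attacker impersonate that device only, not the fleet. A key hardcoded in the firmware image would give no such containment.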

How Much Security Is Enough?

The right level of security depends on your deployment context, threat environment, and what’s at stake.

Low risk: simple peripheral, no network connectivity, physically secure environment. Basic code protection (flash readout protection, debug port lockdown) may be sufficient. Don’t over-invest.

Medium risk: connected device with OTA updates, deployed in semi-accessible locations. You need secure boot, encrypted communications, signed firmware updates, and a key management strategy. This is where most IoT products land.

High risk: safety-critical systems, regulated industries, or high-value targets. Expect to need a hardware root of trust, a dedicated secure element, mutual authentication, physical tamper detection, and formal threat modeling. Standards and guidance such as IEC 62443 (industrial), FDA premarket cybersecurity guidance (medical), ETSI EN 303 645 (consumer IoT), and NIST’s IoT guidance (for example, the NIST IR 8259 series for device manufacturers) provide specific requirements for these contexts.
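
One way to keep these tiers actionable is to encode them as a checklist you can diff against your design. The sketch below is an assumption-laden illustration: the control names are made up for this example, and it treats the tiers as cumulative (a high-risk product also needs the medium and low controls), which the tiers above imply but do not state outright.

```python
from typing import Dict, Iterable, List, Set

TIER_ORDER = ["low", "medium", "high"]

# Controls introduced at each tier, taken from the descriptions above.
TIER_CONTROLS: Dict[str, Set[str]] = {
    "low": {"flash_readout_protection", "debug_port_lockdown"},
    "medium": {
        "secure_boot",
        "encrypted_comms",
        "signed_updates",
        "key_management",
    },
    "high": {
        "hardware_root_of_trust",
        "secure_element",
        "mutual_authentication",
        "tamper_detection",
        "formal_threat_model",
    },
}


def required_controls(tier: str) -> Set[str]:
    """All controls for a tier, including those of the tiers below it."""
    if tier not in TIER_ORDER:
        raise ValueError(f"unknown tier: {tier!r}")
    required: Set[str] = set()
    for name in TIER_ORDER[: TIER_ORDER.index(tier) + 1]:
        required |= TIER_CONTROLS[name]
    return required


def missing_controls(tier: str, implemented: Iterable[str]) -> List[str]:
    """Sorted list of controls the design still lacks for the given tier."""
    return sorted(required_controls(tier) - set(implemented))
```

For example, `missing_controls("medium", {"secure_boot", "flash_readout_protection"})` returns the four remaining controls: debug port lockdown, encrypted communications, key management, and signed updates.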

Common Mistakes

We see the same mistakes across projects:

- Treating security as a late-stage add-on. Security is architectural. Retrofitting secure boot onto a product that wasn’t designed for it often means a hardware respin.

- Hardcoding keys or credentials in firmware. If it’s in the binary, it’s extractable. Every device needs unique credentials provisioned during manufacturing.

- Securing the network but ignoring physical access. TLS doesn’t help if someone can dump your firmware through an unprotected debug port.

- Over-engineering for the threat model. A $5 sensor doesn’t need the same security architecture as a medical infusion pump. Wasted BOM cost and development time add up.

- Under-investing in key management. The crypto is the easy part. Generating, distributing, storing, rotating, and revoking keys across a device fleet is an operational challenge that most teams underestimate.

Getting the Architecture Right

The security decisions you make during architecture, MCU selection, and system design determine what’s possible at every stage after. Adding hardware security features after a board is in production means a redesign. Choosing a provisioning strategy after manufacturing is underway means reworking your factory process.

Getting this right early saves months of rework later. If you’re working through these decisions for an upcoming product, our embedded security engineering services can help.